from fastai.vision.all import *

from fastcore.all import *

Compatibility Block

Check Platform

Platform & Environment Configuration

Imports

Public Imports

Private Imports

from aiking.data.external import *

from aiking.core import aiking_settings

OSError: Could not find kaggle.json. Make sure it's located in /Users/rahul1.saraf/.kaggle. Or use the environment method.
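The `OSError` above is raised because `kaggle` authenticates at import time and finds no credentials. Below is a minimal sketch of a pre-flight check that could run before the import. The helper name `kaggle_credentials_available` is hypothetical and not part of `aiking` or `kaggle`. It assumes the two lookup routes named in the error message: a `kaggle.json` file in the config directory, or the `KAGGLE_USERNAME` / `KAGGLE_KEY` environment variables. The `KAGGLE_CONFIG_DIR` override is also an assumption about how the directory is chosen.

```python
import os
from pathlib import Path


def kaggle_credentials_available(config_dir=None, environ=None):
    """Return True if Kaggle credentials appear to be configured.

    Hypothetical helper for illustration; it only checks for presence,
    it does not validate the credentials themselves.
    """
    environ = os.environ if environ is None else environ

    # Environment method: both variables must be set and non-empty.
    if environ.get("KAGGLE_USERNAME") and environ.get("KAGGLE_KEY"):
        return True

    # File method: look for kaggle.json inside the config directory
    # (assumed default: ~/.kaggle, optionally overridden by KAGGLE_CONFIG_DIR).
    config_dir = Path(
        config_dir or environ.get("KAGGLE_CONFIG_DIR") or Path.home() / ".kaggle"
    )
    return (config_dir / "kaggle.json").is_file()


if not kaggle_credentials_available():
    print("Kaggle credentials not found; Kaggle-dependent imports may fail.")
```

Running a check like this first turns the long traceback into a one-line message on machines where Kaggle is not configured.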

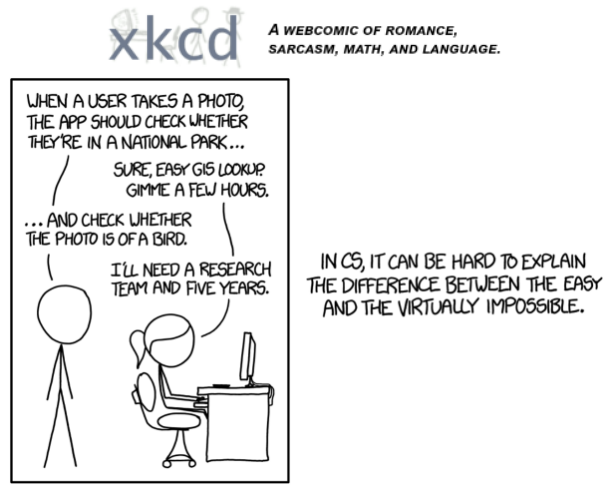

Is Bird or not?

Bird recognition is a problem that was considered impossible to solve five years ago. With modern advances in software, algorithms, and computing, it can now be solved in very few lines of code on your local computer.

Problem Setup

We solve a classification problem using image recognition algorithms and deep learning. We can easily tell whether a picture shows a bird, but how do we teach a computer what is not a bird? The best we can do is take another class of images (here, Forest) and teach the computer to distinguish them from bird pictures.

Workflow

Below is the workflow for this notebook:

flowchart TB

subgraph Data

subgraph Create[Data Scraping and Upload to Datasette]

A[create_image_dataset] --> B[Make Sqlite db from created image.csv \n and upload to Datasette]

end

subgraph ReproducibleData

C[data_frm_datasette] --> D[Laptop]

C --> E[Kaggle]

C --> F[Colab]

C --> G[RemoteServer]

end

end

subgraph DeepLearning

subgraph Datablock

H[Define Blocks] --- I[get_items] --- J[splitter] --- K[parent_label]---L[item_tfms]

end

subgraph Learner

M[Vision Learner] ---N[fine_tune] ---O[predict]

end

end

B --> ReproducibleData

ReproducibleData --> DeepLearning

Datablock --> Learner

Download Data from Datasette

- Keeping a reference copy of the data in Datasette helps me keep the dataset consistent once it has been scraped initially.

- It also lets the notebook run on various platforms (local computer, remote GPU machine, or Colab) while iterating on the same dataset.

- It provides an automatic API for the dataset, which can be used for dashboard creation, integration with Observable, and other third-party tools.

dsname = 'BirdsvsForest'

datasette_base_url = "https://datasette.zealmaker.com"

path = data_frm_datasette(dsname, datasette_base_url); path

Path('/mnt/d/rahuketu/programming/AIKING_HOME/data/BirdsvsForest')
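To illustrate the "automatic API" point from the list above: Datasette serves each table as JSON at `/<database>/<table>.json`. The sketch below only builds such a URL and makes no network call. It does not show how `data_frm_datasette` works internally, and the database name `BirdsvsForest` and table name `image` are assumptions made for illustration.

```python
from urllib.parse import urlencode


def datasette_table_url(base_url, database, table, size=100):
    """Build the JSON endpoint URL for one table on a Datasette instance."""
    # _shape=array asks for a plain list of row objects;
    # _size limits how many rows come back per page.
    query = urlencode({"_shape": "array", "_size": size})
    return f"{base_url.rstrip('/')}/{database}/{table}.json?{query}"


# Hypothetical database and table names, chosen only for illustration.
url = datasette_table_url("https://datasette.zealmaker.com", "BirdsvsForest", "image")
print(url)
```

A dashboard or a third-party tool could fetch this URL directly, without needing the notebook or the `aiking` package.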

Some useful links

path.ls().map(lambda p : p.name)

(#3) ['Bird','Forest','image.csv']

(path/"Bird").ls()[:2]

(#2) [Path('/mnt/d/rahuketu/programming/AIKING_HOME/data/BirdsvsForest/Bird/018632bc-4ac6-4479-a821-3bff4d8f4919.jpg'),Path('/mnt/d/rahuketu/programming/AIKING_HOME/data/BirdsvsForest/Bird/02b56c6f-36f4-452d-8173-d97716069c7a.jpg')]

(path/"Forest").ls()[:2]

(#2) [Path('/mnt/d/rahuketu/programming/AIKING_HOME/data/BirdsvsForest/Forest/012b9515-0b1c-4feb-9353-364af6fbe946.jpg'),Path('/mnt/d/rahuketu/programming/AIKING_HOME/data/BirdsvsForest/Forest/01940593-c864-4241-8329-718bac60fed6.jpg')]

DataBlocks and DataLoaders

- DataLoaders -> object that contains the training and validation sets

- DataBlock -> fastai object used to create DataLoaders

doc(ImageBlock)

ImageBlock

ImageBlock(cls:fastai.vision.core.PILBase=)

A `TransformBlock` for images of `cls`

doc(CategoryBlock)

CategoryBlock

CategoryBlock(vocab:collections.abc.MutableSequence|pandas.core.series.Series=None, sort:bool=True, add_na:bool=False)

`TransformBlock` for single-label categorical targets

dls = DataBlock(

blocks=(ImageBlock, CategoryBlock),

get_items=get_image_files,

splitter=RandomSplitter(valid_pct=0.2, seed=42),

get_y=parent_label,

item_tfms=[Resize(192, method='squish')]

).dataloaders(path, bs=32)
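Two arguments in the `DataBlock` above do most of the work: `get_y=parent_label` takes each image's label from its parent folder name, and `RandomSplitter(valid_pct=0.2, seed=42)` holds out a random 20% of the items for validation. Here is a rough standard-library sketch of both ideas. It is not fastai's implementation: the function names and file paths are hypothetical, and the shuffle will not reproduce the permutation fastai generates for the same seed.

```python
import random
from pathlib import Path


def parent_folder_label(file_path):
    """Label an image by the name of the folder that contains it."""
    return Path(file_path).parent.name


def random_split(n_items, valid_pct=0.2, seed=42):
    """Return (train_indices, valid_indices) from a seeded shuffle."""
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)  # same seed -> same split every run
    cut = int(valid_pct * n_items)        # number of validation items
    return indices[cut:], indices[:cut]


# Hypothetical file names, for illustration only.
files = [f"BirdsvsForest/Bird/{i}.jpg" for i in range(5)]
files += [f"BirdsvsForest/Forest/{i}.jpg" for i in range(5)]

labels = [parent_folder_label(f) for f in files]
train_idx, valid_idx = random_split(len(files))
print(len(train_idx), "training items,", len(valid_idx), "validation items")
```

Fixing the seed keeps the validation set identical across runs and platforms, so metrics from the laptop, Colab, and a remote server stay comparable.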

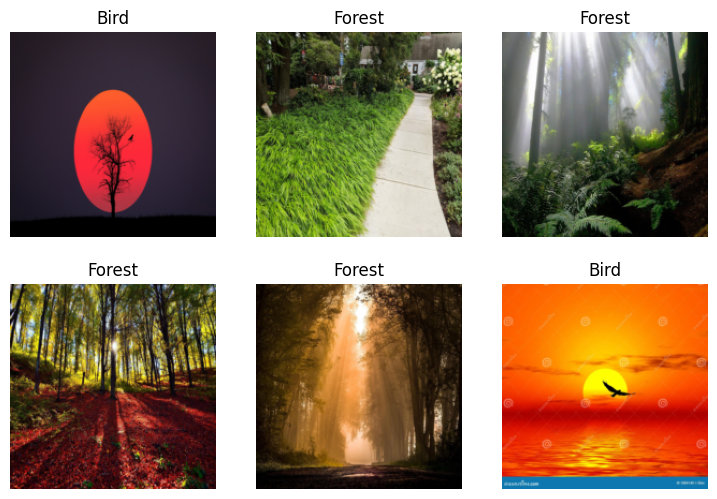

dls.show_batch(max_n=6)

Model Training

learn = vision_learner(dls, resnet18, metrics=[error_rate, accuracy]); learn

/home/rahuketu86/mambaforge/envs/aiking/lib/python3.10/site-packages/torchvision/models/_utils.py:208: UserWarning: The parameter 'pretrained' is deprecated since 0.13 and may be removed in the future, please use 'weights' instead.

warnings.warn(

/home/rahuketu86/mambaforge/envs/aiking/lib/python3.10/site-packages/torchvision/models/_utils.py:223: UserWarning: Arguments other than a weight enum or `None` for 'weights' are deprecated since 0.13 and may be removed in the future. The current behavior is equivalent to passing `weights=ResNet18_Weights.IMAGENET1K_V1`. You can also use `weights=ResNet18_Weights.DEFAULT` to get the most up-to-date weights.

warnings.warn(msg)

<fastai.learner.Learner>

learn.fine_tune(3)

| epoch | train_loss | valid_loss | error_rate | accuracy | time |
|---|---|---|---|---|---|
| 0 | 0.666977 | 0.174406 | 0.055046 | 0.944954 | 00:22 |

| epoch | train_loss | valid_loss | error_rate | accuracy | time |
|---|---|---|---|---|---|
| 0 | 0.177000 | 0.190055 | 0.055046 | 0.944954 | 00:20 |
| 1 | 0.111666 | 0.165208 | 0.064220 | 0.935780 | 00:21 |
| 2 | 0.081644 | 0.178287 | 0.045872 | 0.954128 | 00:19 |
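The two metric columns carry the same information, since `error_rate` is `1 - accuracy`. The short sketch below checks this on the values reported above and then guesses how many validation images each error rate corresponds to. The guess assumes the validation set has at most 200 images, which is an arbitrary bound and not something the notebook states. The true size could be a multiple of the recovered denominator.

```python
from fractions import Fraction

# (error_rate, accuracy) pairs copied from the fine_tune output above.
results = [
    (0.055046, 0.944954),  # frozen epoch
    (0.055046, 0.944954),  # epoch 0
    (0.064220, 0.935780),  # epoch 1
    (0.045872, 0.954128),  # epoch 2
]

# Largest absolute deviation of error_rate + accuracy from 1.
max_gap = max(abs(err + acc - 1.0) for err, acc in results)

# Closest fraction with a small denominator: numerator ~ misclassified images,
# denominator ~ validation set size (under the <= 200 assumption).
guesses = [Fraction(err).limit_denominator(200) for err, _ in results]

print(f"max |error_rate + accuracy - 1| = {max_gap:.1e}")
for (err, _), guess in zip(results, guesses):
    print(f"error_rate {err:.6f} is close to {guess.numerator}/{guess.denominator}")
```

Under these assumptions, the spread between epochs amounts to a difference of only one or two validation images, so the epoch-to-epoch changes in accuracy should not be over-interpreted.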